Sometimes you have a day when two things converge.

This morning I read “The f*** off contact page” by Nic Chan (introduced to me by BrianKrebs on Mastodon, who got it via Hacker News).

Nic describes how often the contact pages on websites seem to be deliberately designed to get rid of you – they don’t want to talk to you.

Nic’s post is a good read – and outlines how this often happens.

My own issue

Later that day I decided to finally get around to reporting an issue I had with my Oura ring – it really wasn’t holding a charge, and was nowhere near the several-day battery life I was getting previously.

It’s still under warranty and I’m pretty sure that it needs replacing – but I’d been putting this off as I dreaded the hoops I’d have to jump through:

- Try our chat bot

- Have you read our FAQ?

- Please do this convoluted process to try to reset everything

- Did I mention our FAQ?

- Oh, you want to talk to someone? How about our bot?

My heart sank…

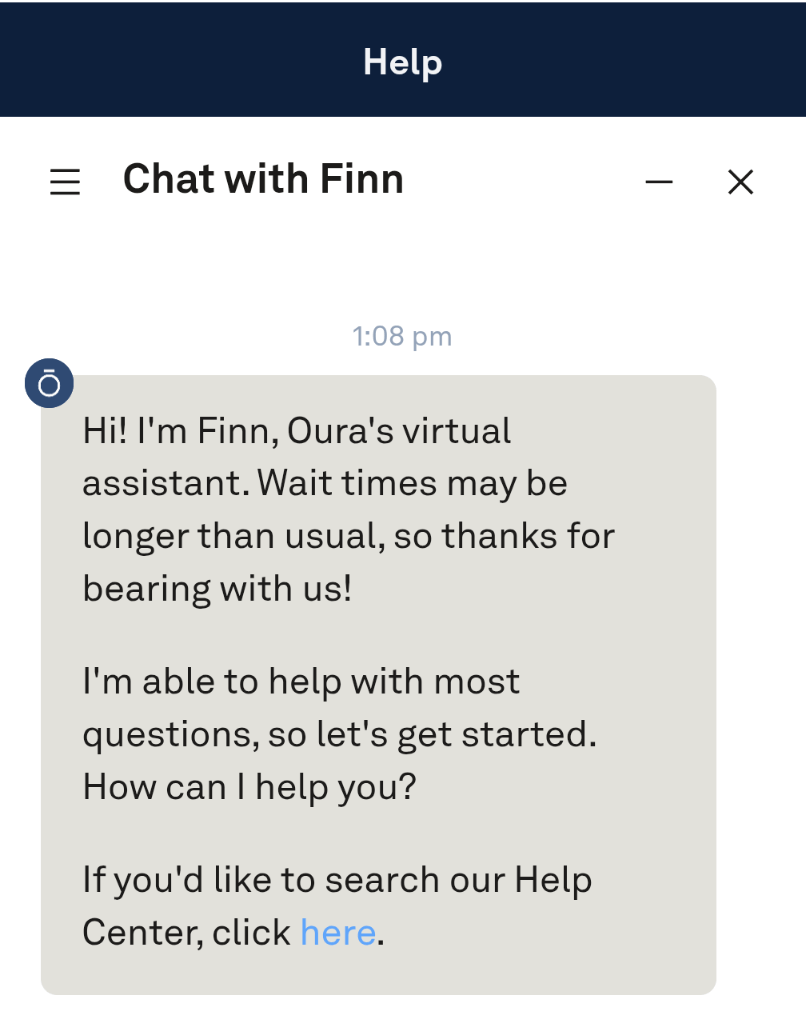

I went into the Oura app, clicked “Help”, and was presented with:

Great, a chat bot.

Now I love a bit of AI – but most of the time these chat bots are useless. They aren’t wired up to anything, they aren’t empowered to do anything – they are designed to prevent you from getting anything done.

Or they send you round in circles telling you that you can do things that you can’t (looking at you, Amazon).

Worse than a f*** off contact page is a f*** off bot.

Oura’s chat bot…

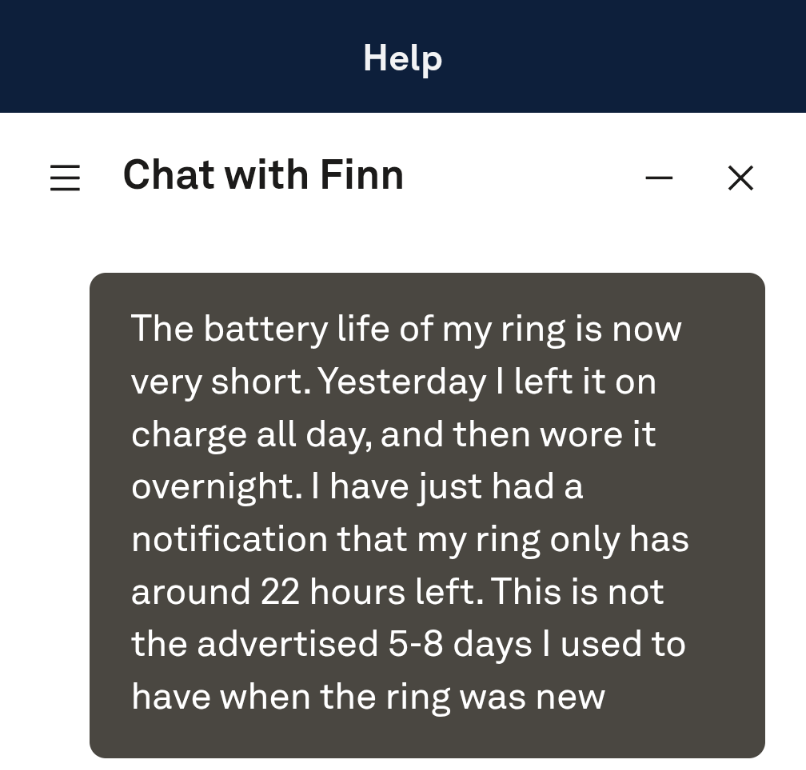

I explained my issue, not expecting much:

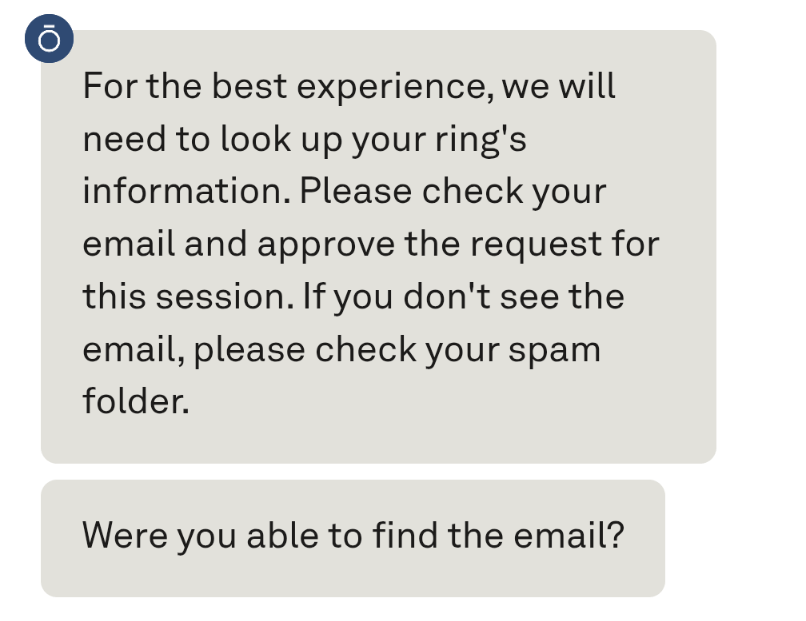

Finn then asked if it could access my account:

…the bot is actually useful

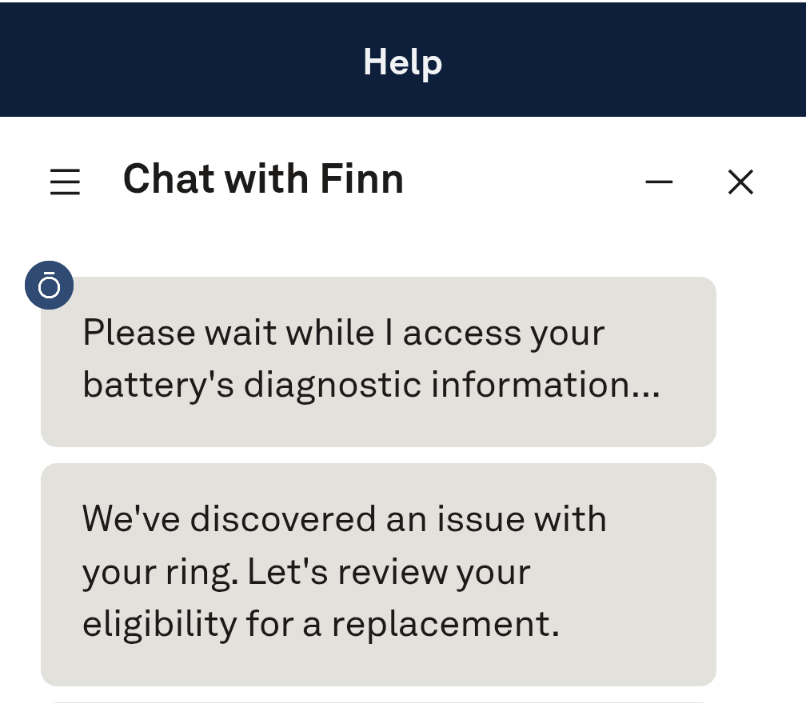

After I clicked the link in the email and confirmed, the chatbot did something I really didn’t expect – it checked my account, grabbed diagnostics, and confirmed that there was an issue!

It immediately went down the “warranty replacement” route – within minutes of me starting the chat.

After confirming my details Finn then handed me off to a human:

From chatbot to human

I expected a “gotcha” here – I expected the human to intervene and then ask me to supply extra information.

But no – just one final confirmation from me, and then a confirmation that a replacement ring was on order:

This is how AI and bots should be implemented

This is a great example of when tech can be implemented in a way that is actually better than what went before.

Previously, a frustrated customer would contact the company. The company wants to make sure that the customer isn’t trying to scam them or get something they are not entitled to. This conversation becomes adversarial very quickly – and everyone involved gets frustrated.

Often the answer to “our helpdesk is inundated with emails from frustrated customers and staff stress levels are sky high” focuses on the “stop the emails” part of the equation.

Easy – pop in a chat-bot with a sprinkling of LLM AI goodness – now it’s all “self-serve” and the number of emails goes down.

But what about the frustrated customers?

Oura have done it differently

If the eventual outcome of a failed bit of hardware is a warranty replacement – why can’t we have an AI bot that gets both the customer and staff to the inevitable end point quicker?

If you have the capability of verifying the customer’s claim that things are not right – why not empower the chat-bot to do that?

Not endless loops of “have you tried this?”, or “have you read the FAQ?”.

By all means have a human in the loop if things don’t quite match up (I suspect that was the case with me – my postal address had changed) – but having a bot pre-clear and verify things not only makes things easier for the customer, it’s easier for the staff as well.
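To make that flow concrete, here is a minimal sketch of the kind of triage logic I’m imagining. I have no idea how Oura actually built theirs: every name, field and threshold below is hypothetical, made up purely for illustration. The idea is simply that the bot checks the device data first, and only involves a human when something doesn’t line up.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    APPROVE_REPLACEMENT = "approve_replacement"
    HUMAN_REVIEW = "human_review"
    TROUBLESHOOT = "troubleshoot"


@dataclass
class Diagnostics:
    # Hypothetical fields; a real device would report its own metrics.
    battery_life_days: float
    expected_battery_life_days: float


@dataclass
class Account:
    # Hypothetical account checks the bot can run on its own.
    in_warranty: bool
    address_matches_records: bool


def triage(diag: Diagnostics, account: Account, fault_ratio: float = 0.5) -> Outcome:
    """Decide the next step for a reported battery fault.

    fault_ratio is an assumed threshold: the fault counts as confirmed when
    measured battery life drops below this fraction of the expected life.
    """
    fault_confirmed = (
        diag.battery_life_days < diag.expected_battery_life_days * fault_ratio
    )
    if not fault_confirmed:
        # The data does not back up the claim, so troubleshooting is reasonable.
        return Outcome.TROUBLESHOOT
    if not account.in_warranty or not account.address_matches_records:
        # The fault is real but something does not match up: hand off to a person.
        return Outcome.HUMAN_REVIEW
    # Fault verified and nothing to query: go straight to the inevitable end point.
    return Outcome.APPROVE_REPLACEMENT
```

In my case that would look something like `triage(Diagnostics(1.0, 5.0), Account(in_warranty=True, address_matches_records=False))`, which lands on `Outcome.HUMAN_REVIEW`: the fault is confirmed, but a person checks the changed address before the replacement goes out.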

As an Oura customer I very quickly went from “I like the product, but there’s a warranty issue, this will be a pain to sort” to “oh, that was easy”.

Well done Oura. Rest of the class please take note!
